Authentication vs. Authorization with OAuth, Does It Really Matter?

While in the security field the terms authentication and authorization have a clearly defined definition, with the introduction of concepts such as “delegated authorization” ambiguity might arise. However, it seems that we know what we intend and therefore should we even bother with such discussions?

Soon after delving into the OAuth specification it becomes evident that this framework has not been designed to be used for authentication, but rather for a so-called “delegated authorization”. Regardless which version is being used, the official specification says that OAuth allows users to share their private resources on one website with another website without sharing their credentials (D. Hardt, 2012). This feature can lead technicians and IT-architects to make bad security decisions around authentication issues when trying to adopt different OAuth flows in another context. For example, in their research Eric et Al. warned about the usage of OAuth with mobile devices since in these cases, the storage of the token is not secure or arbitrary redirects mechanisms are used. These mechanisms pose security issues if not handled correctly (Eric et. Al, 2014). However, the fact that this protocol is not based on username and password credentials might make it a good candidate for scenarios where there is no need for the users to authenticate themselves in the traditional way (e.g. passwords, tokens or biometrics). An example would be cloud-based applications. In general, IT service providers must comply with numerous data and access protection regulations. This means that all data that can be used to distinguish an individual’s identity either directly or indirectly, such as primary account number or address, must be put to proper safeguards in order to protect that data.

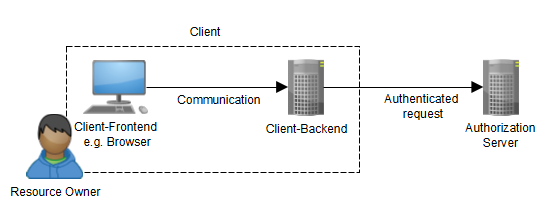

The major concern of OAuth is to define how the client can obtain an authorization grant from a Resource Owner in order to access a protected resource. As of OAuth 2.0, four grant types were introduced: the Authorization Code Grant, the Implicit Grant, the Resource Owner Password Credentials Grant and the Client Credentials Grant (D. Hardt, 2012). The specification is usually used in conjunction with three parties, the Client, the Authorization Server and the Resource Owner (Figure 1), but it may occur that the Client and the Resource Owner are the same. In that case, a 2-legged rather than a 3-legged OAuth protocol is at hand.

Figure 1: 3-legged OAuth.

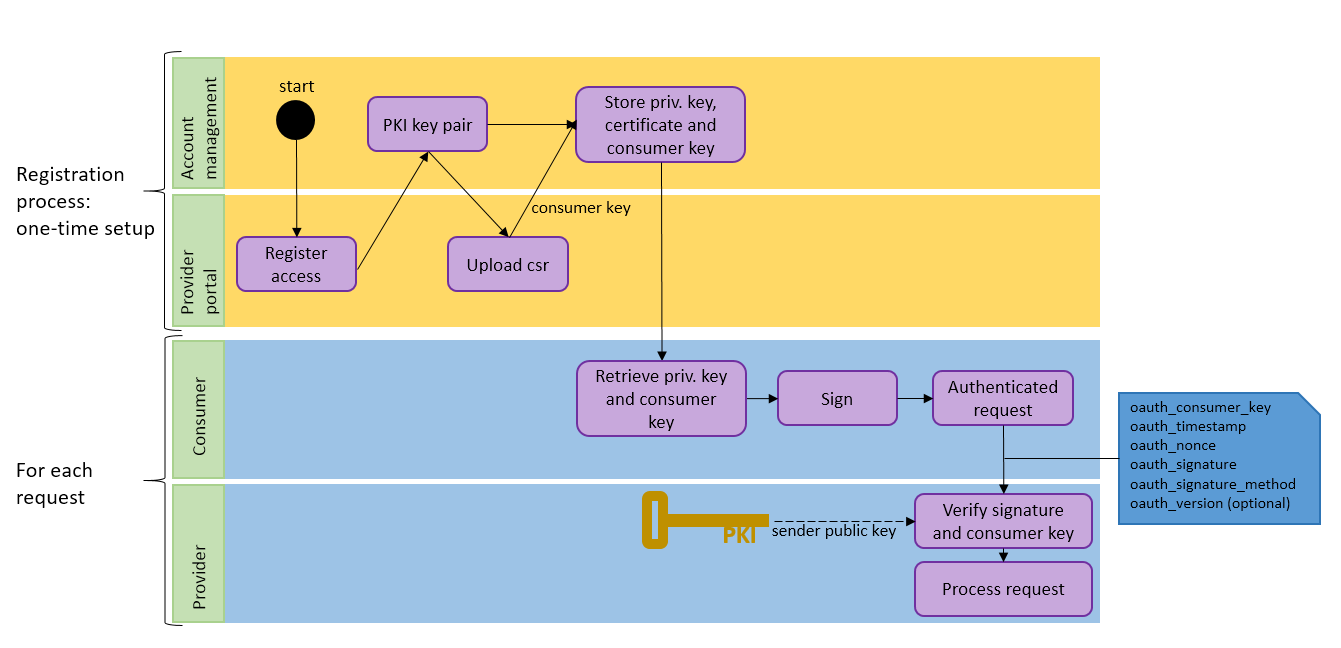

This latter usage can be very useful in a trusted environment such as for B2B or in a Cloud Context where a client needs to authenticate to the authorization server. Despite the fact that both OAuth 2.0 and OAuth 1.0a offer a 2-legged flow, OAuth 2.0 is de facto becoming the predominant standard for API authentication in providing an authorization framework for various clients. In addition, OAuth 2.0 also forms the basis for OpenID Connect, which organizes the way in which the Identity Provider and the Resource Provider communicate with each other (N. Sakimura et Al., 2014). The OAuth 1.0a 2-legged protocol does not bring identity with it, but its flow is easy to understand, adds different interesting security aspects and is straightforward (M. Atwood et Al. 2009). While the OAuth 2.0 Client Credentials Flow needs to access tokens (refresh and Bearer tokens) before starting a new session, OAuth 1.0a can be used in just one step to directly access the resource. Apart from some trivial parameters, such as the timestamp or nonce, for OAuth 1.0a the client must essentially deliver the digital signature and the consumer key - a static identifier generated at the time of the registration with the provider – to the server. The servers hosting the resource can perform authentication and data integrity checks against the signature and the consumer key. This feature also makes this specification very interesting for Cloud Providers where the consumer must solely store the consumer key and its own private Public Key Infrastructure (PKI) certificate safely.

OAuth has been designed as an authorization specification, although it could also be used as an authentication protocol in a context where APIs are exposed through agreements between the consumer and the provider. An understandable question that may arise is “why does it really matter?”. The data owner determines the information sensitivity level based on different factors such as financial, regulatory or privacy. The value of the data is linked to risks, which represent vulnerabilities. For example, the primary account number on a credit card might be classified as strictly confidential. The rule is that the higher the confidentiality and data value is, the higher the security requirements are for robustness. Therefore, data classification can affect the storage architecture, the data protection methods adopted for stored and in transit data as well as for data access (NIST, 2004; NIST, 2013). Since users these days are more concerned about data security, especially being aware about the off-site storage of data, a security policy must be designed and implemented in order to meet high data protection through strong authentication.

In an OAuth context, an adequate strong security setup for authentication is needed for the client, the server and the data message itself in order to guarantee the Authentication, Authorization, and Accounting (AAA) security and data integrity concepts. OAuth 1.0a offers different security mechanisms for authentication: an HMAC Signature with Shared Secret, a Plaintext and an RSA Signature with PKI. The latter option is particularly interesting for a consumer that wants to leverage the PKI possibilities. For this scenario, the consumer that wants to obtain the credentials needs to register himself and upload his own public key to the provider. With his own private key, certificate and the obtained consumer key, the consumer is able to make authenticated requests, which can be verified by the provider (Figure 2).

Figure 2: OAuth registration and usage workflow overview.

Figure 2: OAuth registration and usage workflow overview.

Although the shared key approach may appear to be more straightforward and less complex compared to the PKI approach, due to the key generation, the PKI offers a good opportunity to cloud providers to offer self-registering portals where clients can upload their X509 public keys in order to have a centralized monitoring and enforcing point.

Behind the development of OAuth there are requirements from social media applications where users have been relieved from the password creation nightmare. Enterprise companies also recognized the capabilities of OAuth and started to adopt this standard in their identity management solution. Regarding the usage of Bearer tokens vs. Digital Signature, the controversy between OAuth 2.0 and OAuth 1.0a still remains (hueniverse, 2016). As we have seen, using OAuth in an authentication context rather than an authorization one, for which it was designed, is a sensitive issue. Therefore, for the sake of simplicity and security, it is worth considering the OAuth 1.0a 2-legged option for strong authentication in a B2B context.

References:

D. Hardt, 2012. The OAuth 2.0 Authorization Framework. [ONLINE] Available at: https://tools.ietf.org/html/rfc6749. [Accessed 13 May 2016].

C. Eric, P.Yutong, C. Shuo, T. Yuan, K. Robertand T. Patrick, 2014 OAuth Demystified for Mobile Application Developers. [ONLINE] Available at: http://research.microsoft.com/pubs/231728/OAuthDemystified.pdf. [Accessed 20 May 2016].

N. Sakimura et Al., 2014. OpenID Connect Core 1.0. [ONLINE] Available at: http://openid.net/specs/openid-connect-core-1_0.html. [Accessed 13 May 2016

M. Atwood et Al., 2009. The OAuth 2.0 Authorization Framework. [ONLINE] Available at: http://oauth.net/core/1.0a/. [Accessed 13 May 2016].

NIST, 2004. Standards for Security Categorization of Federal Information and Information Systems. [ONLINE] Available at: http://csrc.nist.gov/publications/fips/fips199/FIPS-PUB-199-final.pdf. [Accessed 13 May 2016].

NIST, 2013. Electronic Authentication Guideline. [ONLINE] Available at: http://csrc.nist.gov/publications/nistpubs/800-63-1/SP-800-63-1.pdf. [Accessed 13 May 2016].

hueniverse, 2016. OAuth 2.0 and the Road to Hell | hueniverse. [ONLINE] Available at: https://hueniverse.com/2012/07/26/oauth-2-0-and-the-road-to-hell/. [Accessed 20 May 2016].