“In the future, security will be inconceivable without AI automation”

What AI governance structure have you implemented at the Federation of Migros Cooperatives? How will it be implemented across the entire Migros Group through the regional cooperative structure?

We have an established technology governance system at Migros. These technology bodies control our use of AI technology. As AI is comparatively new, however, we are keeping a very close eye on it.

“We only release new AI systems if we have no ethical, legal, or information security concerns.” – Dr. Lukas Ruf

How do you define the authorization and role concept for AI applications? Who decides who can use what?

Our technology governance system has defined a central support process. This is the framework within which we assess which AI applications are made available to whom.

What criteria do you use to assess the risk of new AI applications? How do you decide whether to approve or reject them?

The support process for our technology governance includes assessing economic efficiency, data protection, legal issues, information security and, in particular, ethics. We use the results of this assessment to decide whether or not a new AI application is approved.

“Finding the right balance between the benefits and risks of AI solutions is a constant challenge.” – Dr. Lukas Ruf

Do you work primarily with on-prem AI, or do you rely on cloud services? And if so, from which provider and why?

We use both cloud services and on-prem AI, depending on what is required. We are both multi-cloud and multi-solution users.

What training and awareness-raising measures do you carry out so that employees can use AI tools responsibly and in accordance with the rules?

Firstly, we train our employees regularly in data protection, compliance, and information security. Secondly, employees with special data processing responsibilities receive regular training on ethical issues. AI is one of the key topics in these training courses.

How do you define the right ratio of human-in-the-loop versus autonomy in AI agents?

Human-in-the-loop is a risk control mechanism for situations in which you do not fully trust the decisions of an AI. We are always very careful with new technologies and put data protection and the reliability of our processes first. That means we always have a human in the loop wherever AI is used today.

How do you ensure that your AI systems remain ethical, traceable, and compliant?

Our technology governance body examines new AI systems in detail. If no ethical, legal, or information security concerns are raised, our employees can use the approved AI systems in the defined use case without hesitation. If concerns are raised, we either impose conditions that must be complied with or we do not approve the AI system.

How do you deal with data protection, data quality, and model transparency, especially for sensitive applications in the Migros context?

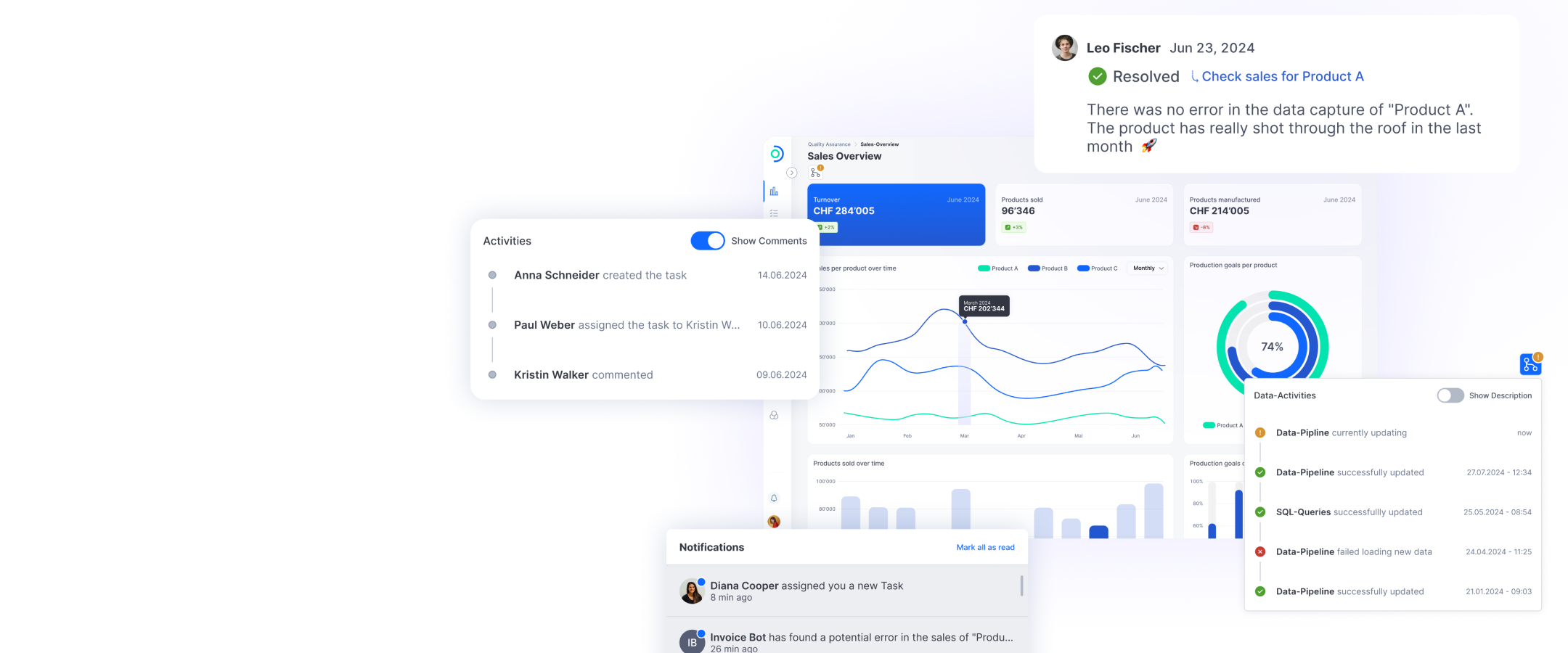

Sensitive applications in particular are assessed by our technology governance system. Findings, comments, and decisions are recorded in verifiable form. This enables us to assess any later changes in the technology against our decision-making framework. As is probably the case everywhere, ensuring data quality is a continuous process.

How is AI changing the CISO role in the Migros Group – both for you and for your team?

AI helps us to implement further automation steps, but these can also harbor new risks. Finding the right balance between benefits and risks is a constant challenge. Without AI-supported automation, the burden of manually processing and checking suspicions and incidents will become unsustainable in the future.

Which AI tools do you currently use in security? What are the biggest challenges in terms of reliability and explainability?

We use our own AI platform, the Migros AI Foundation, to ensure that AI enhancements are used securely and cost-efficiently in our processes. With this as the basis, we can automate various process steps in our organization, for example in SOAR (Security Orchestration, Automation, and Response) or in vulnerability management. However, as we have not yet reached the point where we can leave decisions to the AI, a human in the loop always assesses decisions before they are executed.

ti&m Special “AI & Open Source”