“We need more courage in AI projects”

AI made in Lucerne // While tech giants are pumping billions into AI infrastructure, the Applied AI Center of the Lucerne School of Computer Science and Information Technology at the Lucerne University of Applied Sciences and Arts is taking a different approach. Donnacha Daly, one of the Center’s co-founders, explains why open source could save our digital independence and why, paradoxically, the future of AI lies in smaller models.

Donnacha Daly, how does the Applied AI Center differ from other AI research institutions?

We deliberately focus on applied AI rather than purely theoretical research. Our focus is on specific use cases and creating value for the regional economy. The Applied AI Center brings together all the Lucerne School of Computer Science and Information Technology’s AI activities, from study programs and continuing education to applied research. Our aim is to help regional companies remain competitive by providing them with access to new AI technologies.

How do you implement this practical approach, in the financial or public sectors, for example?

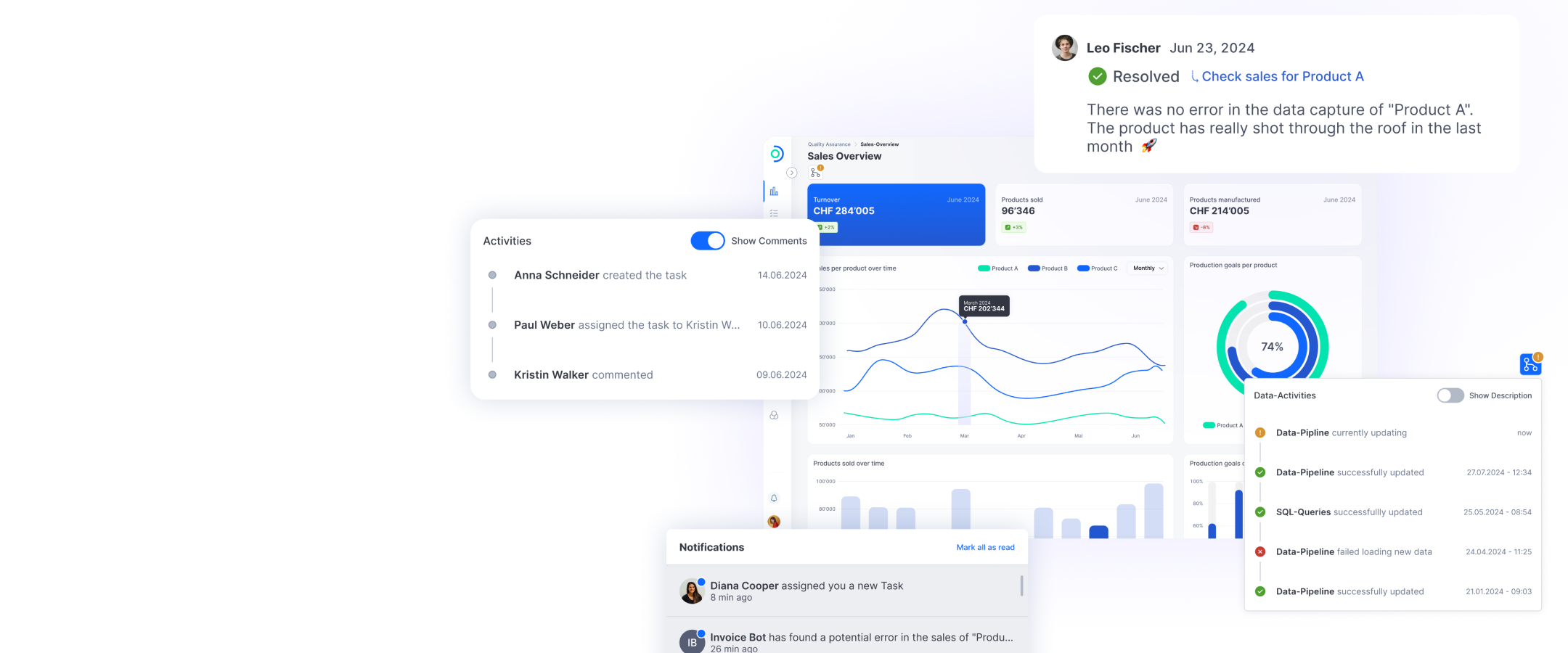

Most of the projects are subject to confidentiality, but we can mention a few. In the financial sector, for example, we have developed an AI-supported youth platform for Swiss cantonal banks under the direction of Professor Marc Pouly. The system recognizes debit card transactions that are eligible for cashback deals and pays out accordingly. We have also developed a transaction-based recommendation system that builds on this data flow.

In the public sector, we work closely with the canton of Lucerne. Together, we established the Local AI Community (LAC). This non-profit association leverages and accelerates existing AI capabilities for public and private stakeholders in the region. We offer continuing education in AI for various public bodies and organize strategic workshops, including our successful AI Breakfast series.

When it comes to AI, many companies immediately think of chatbots with company data. What other applications is the industry experimenting with?

The industry has been blinded by the success of large language models (LLMs) and overlooks the fact that AI is about much more than just chatting with data. These projects are actually among the less interesting examples of the work we do. It becomes much more exciting when companies are brave enough to use AI in their core products and services. We have already successfully implemented hundreds of applied AI projects. These include an AI-supported contract management system for a large Swiss law firm, a claims management engine for an insurance company, an algorithmic trading solution for a Japanese energy company, and an AI-supported situation analysis for a hearing aid manufacturer.

“The industry overlooks the fact that AI is about much more than just chatting with data. It becomes much more exciting when companies are brave enough to use AI in their core products and services.” – Donnacha Daly

What does digital sovereignty mean to you in the context of AI?

The barriers to entry for frontier models are huge. Neither Switzerland nor the EU has a seat at the table when it comes to infrastructure development in North America and China, from energy and data to computing capacity. These developments significantly undermine our digital sovereignty. We have been sending our data, our money, and our people to the USA for years. In my opinion, two trends will help us: open source and the Law of Accelerating Returns. Open source enables us to host and use models such as Llama or DeepSeek independently. The best open source models of today are better than the best proprietary models of 18 months ago. The second trend is that better models lead to smaller models. This brings the technology back within the reach of European research and development.

How important are explainability and traceability of AI systems for industry?

That’s a tricky issue. When AI makes a medical diagnosis or rejects a mortgage application, we want to know why. However, the deep learning models on which most modern AI systems are based are inherently “black box” systems, making them structurally unsuitable for explainability. This creates a dilemma: we have very powerful AI systems that could help solve many social and economic problems, yet we lack the tools to understand how they reach their conclusions. European regulators are right to reject high-risk applications. However, this does not change the fact that companies around the world are using AI wherever it can increase efficiency.

“Two trends make me optimistic. First, open source enables us to use powerful models such as Llama or DeepSeek independently. Second, better models are getting smaller and smaller, which brings the technology back within the reach of European research and development.” – Donnacha Daly

What are your predictions for AI development?

I’m expecting a market correction in the short term. It feels like the year 2000 all over again: a lot of hype and overinvestment without the promised returns. The underwhelming release of GPT-5 led many to question the scaling laws. But that doesn’t change the fact that we have seen a transformation in the capabilities of modern AI systems. Five years ago, developments in video and image generation or robotics of this quality and at this speed were unthinkable. I would venture two predictions. First, small language models will democratize AI within the next three to five years, decentralizing it away from hyperscalers. Second, household robots will no longer be a novelty in ten years’ time, whether developed by Apple, Tesla, or another provider.

The Applied AI Center of the Lucerne University of Applied Sciences and Arts (HSLU)

ti&m Special “AI & Open Source”