Language-based productivity — artificial intelligence as a copilot

Microsoft Switzerland // ChatGPT brings AI into the realm of human language, opening up new possibilities and sparking debates. The real added value is much less disruptive than you might think, as it in fact boosts productivity and satisfaction.

AI is often referred to as a ‘disruptive’ innovation. There is truth in this, because AI enables people to interact with a machine using human language. At the same time, using AI does not correspond to the ‘creative destruction’ usually associated with innovation, because AI applications embed themselves in existing structures and can improve them from the inside out. One thing’s for sure: it all depends on how we develop AI, how we use it and what frameworks we create. AI does not simply automate work; it offers a way to rethink how work can be made more efficient and at the same time more fulfilling.

The most natural interface there is

The current generation is the first to see AI play a central role in everyday life, starting with the launch of ChatGPT in November 2022. Why? ChatGPT opens up access to the opportunities of artificial intelligence via the most natural interface there is: human language. Language plays a special role in this breakthrough, since the way we communicate with each other is an essential part of human intelligence. Or as the Israeli historian Yuval Noah Harari put it: “Artificial intelligence has hacked the operating system of human civilization by becoming capable of language, recognizing correlations and helping to solve complex problems.”

Harari’s statement sums up one side of the debate surrounding artificial intelligence. He takes a rather skeptical view and emphasizes the dangers of a technology that is developing at breakneck speed and seems to be putting increasing pressure on human intelligence. The other view is held by ‘technology optimists’, who see technology and free markets as the primary driving forces behind progress and civilization.

The fact is, artificial intelligence has only recently drawn level with humans in a wide variety of areas, and has become able to recognize objects and answer questions adequately. Three factors are driving the latest wave of innovation in the field of AI: first, the almost unlimited availability of data, which today is produced practically everywhere; second, the exponential growth in computing power, available anytime and anywhere from the cloud; and third, the way in which these two elements can be combined.

Working differently

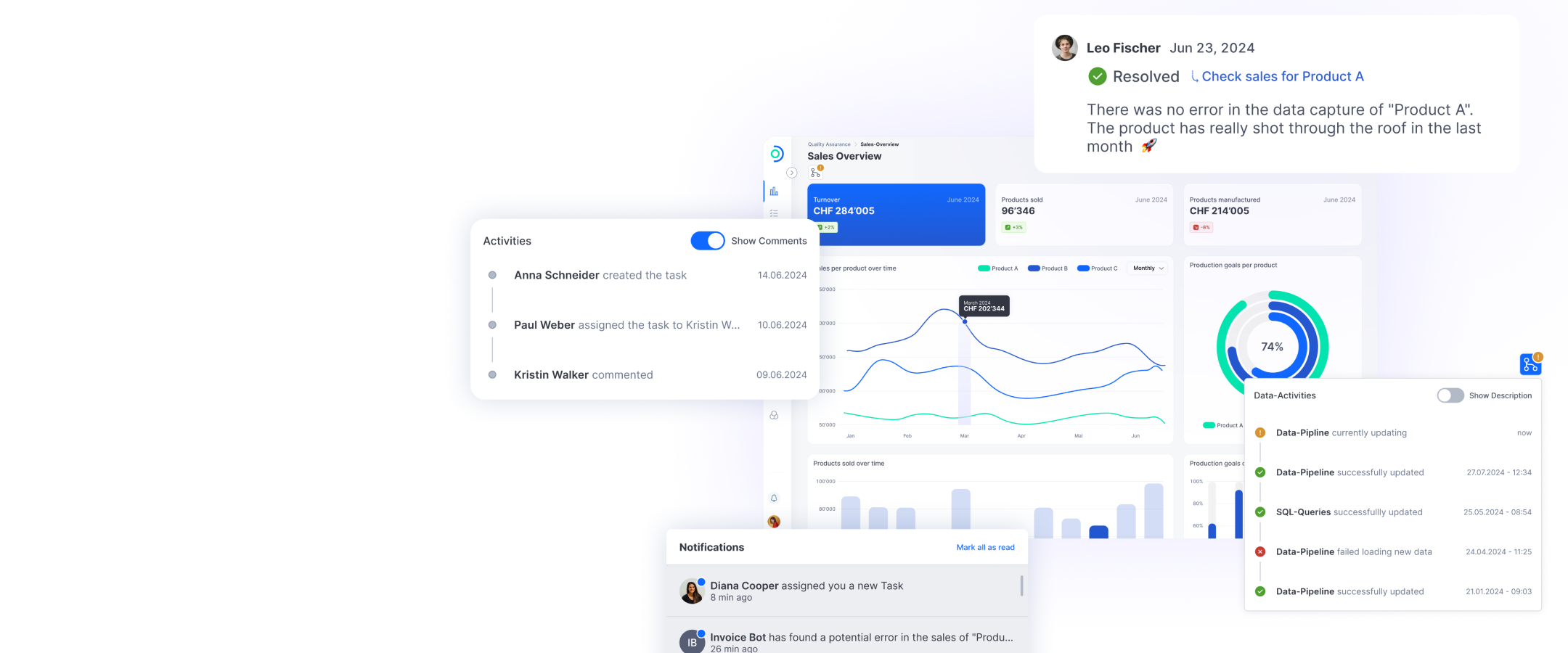

There’s a common denominator among the various applications of AI (such as ChatGPT): they’ve all been successful. Never before have so many people used a form of technology in such a short space of time. ChatGPT reached 100 million users in just three months. AI technologies are also being used more and more frequently in the business environment. Over 75 companies and more than 100 start-ups are already using generative AI through Microsoft data centers in Switzerland. Their use cases range from optimizing customer interactions to internal knowledge management and developing new products.

Why? AI has demonstrable business value. However, this value doesn’t stem from savings through increased automation or increased outsourcing of tasks. AI enables people to work more productively, more successfully and with greater satisfaction. It acts as a kind of personal assistant for the user in all kinds of digital applications. When people can escape from repetitive or boring tasks, they can use their human ingenuity and focus on more strategic or creative work.

“The AI brain”

“Microsoft AI as your very own copilot”

Our responsibility

It’s equally important to understand AI for what it is: a kind of support, a source of inspiration, a correction engine or a decision-making aid. People always remain front and center, and retain control. That is fundamental. And that’s why ‘responsible AI’, in the form of a development guideline for Microsoft, is right at the heart of the debate. The guideline is intended to ensure that the development of systems, data, the model, the software and access to applications is secure and fair across the board. These aspects are crucial because AI systems are used to make important decisions that affect real people, such as in healthcare, and these systems can’t be perfect. People make mistakes and we live in an imperfect world, which means that AI systems can also adopt the prejudices, untruths or shortcomings that exist everywhere. This approach to responsible AI primarily involves understanding the data used to train the systems, in order to find ways to address these shortcomings.